Why Some AI Music Tools Stick and Others Fade After a Week

The first time I used an AI music generator, I was genuinely impressed. I typed a short description, and within seconds I had a complete track with vocals, arrangement, and a sense of musical structure that would have felt impossible just a few years ago. I shared it with a friend. I felt like I had discovered something remarkable. Then I closed the tab. A week passed. When I opened the tool again, the initial excitement had been replaced by a quiet question: do I actually want to build a creative habit around this platform, or was that one good result enough?

That question reshaped how I evaluate AI music tools. A flashy first generation matters far less than whether the platform supports consistent creative work across different projects, different moods, and different levels of preparation. I tested seven platforms over multiple weeks, returning to each one with varying intentions. I brought lyrics one day, a rough mood description the next, and a need for pure instrumental background music after that. I wanted to see which tools could handle the variety and which ones collapsed into single-purpose novelty. The platform that came out ahead was not necessarily the one with the most polished demo. It was the one where I could imagine building a real creative rhythm.

The Return-Use Test

Most AI music platform reviews focus on a single session. Someone types a prompt, listens to the result, and writes an opinion. That approach captures the first impression. It does not capture what happens when the magic of automation fades and the user starts asking practical questions. Can I find yesterday’s generation? Can I try a different model without starting over? Does the interface still feel clean on the tenth visit, or does it start to grate?

I designed my test around these questions. Each platform received at least four separate sessions on different days. I varied the creative task each time. One session focused on lyric-driven song generation. Another focused on instrumental background music for a hypothetical product video. A third session tested short-form content music suitable for social clips. The fourth session was unstructured, allowing me to explore whatever the platform made easy to access.

The Platforms Under Review

The tools I tested were ToMusic AI, Suno, Udio, Soundraw, Mubert, Beatoven, and AIVA. These cover the range from full-song vocal generators to instrumental-focused background music platforms, the span many users would loosely group under the label of AI music maker. Some are well-known with large user communities. Others serve narrower niches. All of them offer some form of text-prompt-based music generation. The question was not which one could produce the most impressive single track. The question was which one felt sustainable over time.
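Crossing the seven platforms with the four session types described earlier gives the minimum test grid. A small Python sketch of that grid follows; the session labels are shorthand for the four tasks, and the variable names are illustrative only.

```python
from itertools import product

# The seven platforms named in this review.
platforms = ["ToMusic AI", "Suno", "Udio", "Soundraw", "Mubert", "Beatoven", "AIVA"]

# Shorthand labels for the four session types (wording is illustrative).
session_types = [
    "lyric-driven song",
    "instrumental background for a product video",
    "short-form social clip",
    "unstructured exploration",
]

# One entry per (platform, session type) pair: the minimum number of sessions.
plan = list(product(platforms, session_types))

print(len(plan))  # 28
```

Since each platform received at least four sessions, 28 is a floor rather than an exact count.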

What Repeated Use Revealed

The table below reflects my assessment after multiple return sessions with each platform. The scores are not based on any single generation but on the cumulative experience of treating each tool as part of a creative workflow. Higher is better in every column, so a high Ad Distraction score means fewer interruptions.

| Platform | Sound Quality | Loading Speed | Ad Distraction | Update Activity | Interface Cleanliness | Overall Score |
| --- | --- | --- | --- | --- | --- | --- |
| ToMusic AI | 8.9 | 9.2 | 9.1 | 9.1 | 9.3 | 9.1 |
| Suno | 9.1 | 8.5 | 8.5 | 9.3 | 8.1 | 8.7 |
| Udio | 8.8 | 8.1 | 8.3 | 8.9 | 7.9 | 8.4 |
| Soundraw | 8.3 | 8.4 | 8.4 | 8.1 | 8.3 | 8.3 |
| Mubert | 7.9 | 8.5 | 8.3 | 8.0 | 8.2 | 8.2 |
| Beatoven | 7.7 | 7.9 | 8.1 | 7.8 | 7.8 | 7.9 |
| AIVA | 7.6 | 7.6 | 8.0 | 7.7 | 7.7 | 7.7 |
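The table does not state how the Overall Score column is derived, but the numbers are consistent with a plain unweighted mean of the five category scores, rounded to one decimal place. A minimal Python sketch to check that, assuming equal weights (the helper name `mean_score` is illustrative):

```python
# Category scores copied from the table, in column order:
# sound quality, loading speed, ad distraction, update activity, interface cleanliness.
# The second element of each pair is the published Overall Score.
scores = {
    "ToMusic AI": ([8.9, 9.2, 9.1, 9.1, 9.3], 9.1),
    "Suno":       ([9.1, 8.5, 8.5, 9.3, 8.1], 8.7),
    "Udio":       ([8.8, 8.1, 8.3, 8.9, 7.9], 8.4),
    "Soundraw":   ([8.3, 8.4, 8.4, 8.1, 8.3], 8.3),
    "Mubert":     ([7.9, 8.5, 8.3, 8.0, 8.2], 8.2),
    "Beatoven":   ([7.7, 7.9, 8.1, 7.8, 7.8], 7.9),
    "AIVA":       ([7.6, 7.6, 8.0, 7.7, 7.7], 7.7),
}


def mean_score(values):
    """Unweighted mean of the category scores, rounded to one decimal place."""
    return round(sum(values) / len(values), 1)


for platform, (categories, published) in scores.items():
    computed = mean_score(categories)
    status = "matches" if computed == published else "differs"
    print(f"{platform}: computed {computed}, published {published} ({status})")
```

Under the equal-weight assumption every row matches. A reader who cares more about one column, such as sound quality, could swap in a weighted mean and re-rank the platforms accordingly.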

When the First Track Is Not the Best Track

One pattern that emerged clearly during testing was the difference between first-generation luck and consistent output quality. Some platforms seemed optimized to deliver an impressive initial result. The first track sounded polished, and I felt like the tool understood my intent immediately. But when I tried to generate a track with different parameters on a different day, the output felt less assured. The quality varied more than I expected.

ToMusic AI took a different approach. Individual generations were not always the most dramatic or surprising, but quality stayed within a relatively narrow range from one session to the next. The platform felt tuned for consistency rather than spectacle. Over time, that consistency became more valuable than occasional brilliance. When I need music for a real project, I care more about reliable output than about being amazed once and disappointed the next three times.

Lyrics-to-Song Workflow in Practice

The feature I tested most extensively was lyric-based generation. I prepared several sets of original lyrics with different structures, thematic tones, and intended genres. I fed the same lyrics into multiple platforms to compare how each one interpreted the material.

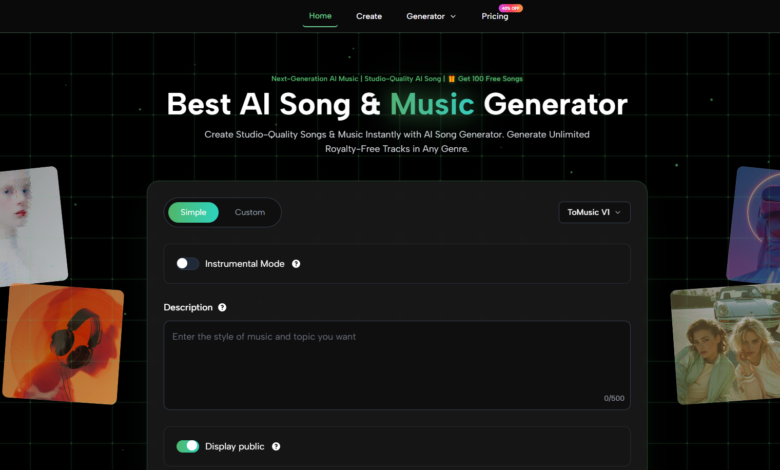

ToMusic AI handled this task well because its custom mode is built around lyric input as a primary creative path. The platform allows users to enter lyrics and specify style parameters, then generates a complete song that respects the lyrical structure. Results varied, as expected. Some generations required multiple attempts to find the right genre fit. But the workflow itself felt intentional rather than bolted on. The platform seemed designed for people who write lyrics and want to hear them as songs, not just for people who want to type a mood and receive a generic track.

Instrumental Mode and Creative Flexibility

Not every project needs vocals. During testing, I deliberately generated instrumental tracks for imagined use cases such as podcast intros, background music for tutorial videos, and ambient soundscapes for game concepts. Some platforms made instrumental generation feel like an afterthought. The interface treated vocals as the default and required extra steps to disable them. ToMusic AI offers an instrumental mode as a clearly visible option within the generation flow. This may seem like a small design choice, but over repeated sessions, it saved time and reduced confusion. The official site presents the tool as suitable for commercial creative use across video, content creation, advertising, games, education, and personal projects, which makes instrumental flexibility a practical necessity.

Music Library and the Long-Term Value Question

The feature that most directly influenced my willingness to return was the Music Library. After generating music across multiple sessions, I wanted to find a specific track I had created four days earlier. On some platforms, this task was unexpectedly difficult. Tracks were not clearly labeled. Search was absent or rudimentary. Download history was separate from generation history. These small inconveniences added friction to every return visit.

ToMusic AI handles this differently. Generated tracks are automatically saved to a library with metadata that makes retrieval straightforward. You can browse, search, and download previous outputs from one place. This matters more than it might first appear. A platform that helps you manage your own creations encourages you to create more. A platform that makes you hunt for your own work discourages continued use.

Why Retrieval Shapes Creative Behavior

When you know you can find a track later, you become more willing to experiment in the moment. You generate variations without anxiety. You try different models because you trust that the results will not disappear into an unsorted feed. This psychological effect changed how I used ToMusic AI compared to other platforms. I generated more tracks per session. I was less attached to any single result. I treated generation as an exploratory process rather than a high-stakes gamble. That shift in mindset is worth far more than any single impressive output.


The Generation Process on ToMusic AI

The following workflow reflects what I observed during repeated sessions on the platform. It covers the steps from creative intention to final download.

Begin by deciding between simple mode and custom mode. Simple mode lets you describe music in natural language without additional input. Custom mode opens a deeper workspace where you can enter lyrics, specify genre, mood, tempo, instruments, and vocal direction.

Enter your creative input. In simple mode, write a description of the music you want. In custom mode, you can provide lyrics with structural markers and define the stylistic parameters that match your intention.

If the platform presents model options, select one that aligns with your needs. Different models may produce different musical characteristics.

Generate the track, listen, and decide whether to refine or keep the result. Once satisfied, save the output to your Music Library and download it in your preferred format.
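The steps above can be sketched as a small pre-submit checklist. This is a hypothetical Python model of the inputs, not ToMusic AI's actual interface or API: the class name, field names, the bracketed lyric marker, and the checks themselves are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GenerationRequest:
    """Hypothetical container for the inputs described in the steps above."""
    mode: str                                  # "simple" or "custom"
    description: str = ""                      # natural-language prompt (simple mode)
    lyrics: str = ""                           # lyrics with structural markers (custom mode)
    style: dict = field(default_factory=dict)  # genre, mood, tempo, instruments, vocal direction
    instrumental: bool = False                 # assumed flag for instrumental mode
    model: Optional[str] = None                # only relevant if the platform offers a choice

    def problems(self) -> list[str]:
        """Return reasons the request is not ready; an empty list means ready."""
        issues = []
        if self.mode not in ("simple", "custom"):
            issues.append(f"unknown mode: {self.mode!r}")
        if self.mode == "simple" and not self.description.strip():
            issues.append("simple mode needs a description")
        if self.mode == "custom":
            if not self.instrumental and not self.lyrics.strip():
                issues.append("custom mode with vocals needs lyrics")
            if not self.style:
                issues.append("custom mode should name at least one style parameter")
        return issues


# Example: a custom-mode draft. The "[Verse]" marker syntax is illustrative only.
draft = GenerationRequest(
    mode="custom",
    lyrics="[Verse]\nCity lights are fading slow",
    style={"genre": "indie folk", "tempo": "slow"},
)
print(draft.problems())  # []
```

Writing the inputs down this way before generating makes it easier to change one parameter at a time between attempts, which fits the iterative workflow described here.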

Where Consistency Still Depends on the User

I want to be straightforward about what this platform cannot do. The quality of output depends significantly on the quality of input. Vague prompts produce vague music. Even with carefully written descriptions, some generations will miss the intended mood or structural feel. The platform provides the tools for direction, but it does not eliminate the need for human judgment.

Limitations Worth Acknowledging

Some genres are currently modeled more effectively than others. Extremely niche or experimental styles may require more attempts to approach what you hear in your head. Vocal timing can occasionally drift in ways that disrupt lyrical flow. Custom lyrics with complex internal rhyme structures sometimes produce uneven results that require regeneration. These are not failures specific to any one platform. They reflect the current state of AI music generation as a whole.

Who Is Likely to Benefit Most

The platform works best for creators who understand that generation is iterative. If you expect a perfect track on the first attempt every time, no current AI music tool will satisfy you. If you are comfortable generating several versions, listening critically, and refining your input based on what you hear, the workflow becomes productive quickly. Content creators, video editors, game developers, podcasters, and songwriters testing ideas will likely find the most value here.

After weeks of testing, I found that the platforms I returned to were not necessarily the ones with the highest peak quality or the most innovative feature lists. They were the ones that made the process of returning feel natural. ToMusic AI earned its place on that list through clean design, consistent performance, a practical Music Library, and flexible generation paths that accommodate different creative starting points. The platform does not promise to replace musical skill or creative judgment. It offers a structured, low-friction environment where those qualities can be applied more efficiently. In a space where many tools fade after the first novelty wears off, that kind of design holds up.